Visualizing the TCP congestion avoidance algorithm

Marc Herbert

INRIA / Sun Labs Europe

| Revision History | | |
|---|---|---|
| Revision 1.5 | 2002/10/25 15:24:41 | |

Introduction

Maybe you have wondered what happens when a big server, connected to the internet with a fat pipe, transfers a big file to you, sitting behind a tiny modem connection.

The naive thing the server could do would be to split the file into IP packets and send them all at full speed. Since the modem link has a low maximum throughput, these packets would have to be stored somewhere in between: in IP routers. But routers are designed to transfer packets, not to provide (costly) storage. When they fill up ("congestion"), they simply drop packets! Sending packets only to see them dropped is not clever at all. This is where TCP congestion avoidance comes in.
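To make the naive scenario concrete, here is a minimal sketch of a router queue sitting between a fast and a slow link. It is a toy model, not the real experiment: the function name, the queue capacity of 10 packets and the file size of 1000 packets are assumptions chosen for illustration. Only the speed ratio of 100 comes from the 10Mb/s and 0.1Mb/s links used later on this page.

```python
def naive_burst(packets, queue_capacity, arrivals_per_departure):
    """Count delivered and dropped packets for a sender that never slows down.

    Toy model (hypothetical): each time the slow link drains one packet,
    up to `arrivals_per_departure` packets arrive from the fast link.
    """
    queued = delivered = dropped = 0
    remaining = packets
    while remaining > 0 or queued > 0:
        # Fast side: a batch of packets reaches the router.
        arriving = min(arrivals_per_departure, remaining)
        remaining -= arriving
        # The router stores what fits and drops the rest.
        accepted = min(arriving, queue_capacity - queued)
        queued += accepted
        dropped += arriving - accepted
        # Slow side: the modem link forwards a single packet.
        if queued > 0:
            queued -= 1
            delivered += 1
    return delivered, dropped


if __name__ == "__main__":
    delivered, dropped = naive_burst(1000, queue_capacity=10,
                                     arrivals_per_departure=100)
    print(f"delivered={delivered} dropped={dropped}")
```

With these assumed numbers almost every packet is thrown away, which is the waste that congestion avoidance tries to prevent.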

TCP, and TCP congestion avoidance in particular, are hot topics, and you can find a whole lot of information about them. I saw many (more or less boring :-) articles about TCP; most papers go deep into details, trying to explain complex issues (TCP is far from solving all problems). I wanted to see a very short, simple and "live" example of the TCP congestion algorithm, with pictures. So I set up this experiment and found the results nice enough to share; hopefully I am right.

Overview

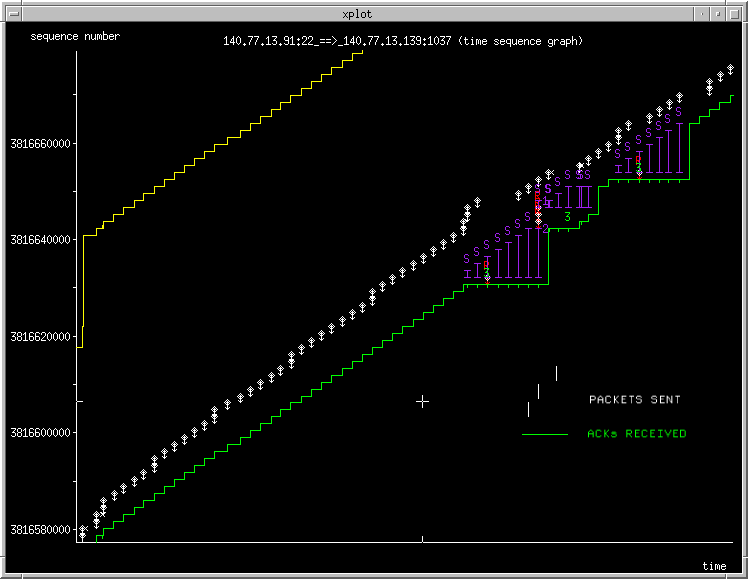

The aim of the experiment is to see how packets flow when a server with a fat pipe (10Mb/s) regulates its sending rate to cope with a slow receiver (0.1Mb/s). Let's start with a broad view (Figure 1). Oh, by the way, all the plots are bitmap images, which is a pity, since you cannot zoom in yourself. I have tried some vector formats (EPS, Flash, SVG,...) but none of them combines a decent toolbox with good browser support. If you are really interested in trace details, you can download the trace in the original xplot format, or in this gnuplot-converted format.

This trace is built using tcpdump, tcptrace, and xplot. The X axis is time. The Y axis is the position in the file to transfer. Each tiny white vertical dash is a part of the file, i.e., a TCP/IP packet. The green line is the amount of data acknowledged by the receiver so far. Events are timed on the sender. The vertical distance between the green line and the white dashes is the amount of data "somewhere in the network", from the server's point of view: these are the packets sent but not yet acknowledged by the receiver. Red dashes are packets transmitted a second time (well... at least a second time). You don't need to understand the yellow and purple signs for the moment. If you really want to, go and read the Time sequence tcptrace documentation.
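The "vertical distance" read off the plot can also be computed from a list of events. The sketch below assumes a simplified, made-up event format (time, kind, byte position); it is not the tcpdump or xplot file format, and the function name is hypothetical.

```python
def outstanding_over_time(events):
    """Return (time, bytes_in_flight) after each event.

    `events` is a list of (time, kind, position) tuples, where kind is
    "send" (position = end of the segment just sent, a white dash) or
    "ack" (position = highest byte acknowledged, the green line).
    This event format is an assumption made for illustration.
    """
    highest_sent = 0
    highest_acked = 0
    result = []
    for time, kind, position in events:
        if kind == "send":
            # A retransmission (red dash) does not move the top of the plot.
            highest_sent = max(highest_sent, position)
        elif kind == "ack":
            highest_acked = max(highest_acked, position)
        result.append((time, highest_sent - highest_acked))
    return result


if __name__ == "__main__":
    sample = [
        (0.0, "send", 1000),
        (0.1, "send", 2000),
        (0.2, "ack", 1000),
        (0.3, "send", 3000),
        (0.4, "ack", 3000),
    ]
    for time, in_flight in outstanding_over_time(sample):
        print(f"t={time:.1f}s in flight: {in_flight} bytes")
```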

Apart from the complex beginning, which we skip, you can see a repetitive pattern in this picture: the emitter sends white packets at a slightly faster rate than it sees green acknowledgements coming back from the receiver, so the space between green and white grows slowly. The slope of the green line is the maximum modem speed. After some time a disaster occurs, as shown by the presence of a lot of noisy lines. After a disaster, things start again and repeat. Let's zoom in.

Some details

Figure 2 shows only one step of the repetitive pattern. At first, in the bottom left, the emitter sends a small burst of packets. As soon as the number of packets sent but not yet acknowledged reaches 3, the emitter systematically waits for an acknowledgement before sending a new packet. Sending becomes "ACK-clocked" for a while. The green and white lines stay parallel for 5 packets, and the space between them, called the congestion window, stays at 3.

After the successful transmission of these 5 packets, the emitter "doubles" the emission of the last one of them, sending 2 packets at the same time, and thus increasing the congestion window to 4. When 6 packets are transmitted successfully, the congestion window is incremented again and goes to 5, etc. This congestion window, representing the number of packets "in the network", goes on increasing more and more slowly (in this case, since each increment needs more acknowledged packets than the previous one) until it reaches the area with red and purple lines.

At this point, the green line does not increase anymore and purple lines increase instead. Purple lines are selective acknowledgements. The receiver indicates to the emitter that it has correctly received everything under the green line, plus the purple part of the file. So the space between green and purple must be a lost packet. Its retransmission is shown with the red "R".
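The slow growth of the congestion window can be sketched with the textbook rule "add one packet to the window once a full window has been acknowledged". This is an assumption: the exact counts in the trace above (5 packets at window 3, 6 at window 4) differ slightly from this rule, and the function name and parameters are hypothetical.

```python
def window_growth(start_window, max_in_flight):
    """Return (acks_seen, window) each time the window changes.

    Textbook-style model (an assumption, not the traced TCP stack):
    the window grows by one packet after `window` acknowledgements.
    """
    window = start_window
    acks_in_round = 0
    total_acks = 0
    history = [(0, window)]
    while window < max_in_flight:
        total_acks += 1
        acks_in_round += 1
        if acks_in_round == window:
            # A full window was acknowledged: allow one more packet in flight.
            window += 1
            acks_in_round = 0
            history.append((total_acks, window))
    return history


if __name__ == "__main__":
    for acks, window in window_growth(start_window=3, max_in_flight=6):
        print(f"after {acks:2d} acks: window = {window}")
```

Because acknowledgements come back at the constant speed of the modem link, each step takes a little longer than the previous one, so the window climbs more and more slowly until the router queue overflows.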

What happened here, at this "purple" point, is that the number of packets "in the network" was too big: some router decided it had been storing them for too long (about 1 second here) and threw some away. This is how IP routers react to congestion. After a chaotic transition phase that will not be detailed here, the emitter understands that it has pushed the network too far, and things start again (upper right) with a more sensible congestion window of 3, just like in the beginning.

Longer queues

In the next plot, I have lengthened the queue in the router to a dozen packets. Packet drops therefore happen less often, and the progression of the congestion window can be seen even more clearly. As above, you can also download the trace in xplot format.

On this second trace we can see that drops now occur approximately every 10s instead of 4s, and that packets stay at most 1.5s in the queue instead of 0.5s.
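The maximum time spent in the queue can be roughly cross-checked with simple arithmetic: a full queue takes (number of packets × packet size) / link speed to drain. The sketch below assumes 1500-byte packets, which is a guess (the packet size is not stated on this page); the 0.1Mb/s link speed and the dozen-packet queue come from the text above.

```python
def queue_delay_seconds(packets_queued, packet_bytes=1500,
                        link_bits_per_second=100_000):
    """Time for the slow link to drain `packets_queued` packets.

    packet_bytes=1500 is an assumed packet size; 100_000 bit/s is the
    0.1Mb/s receiver link of the experiment.
    """
    return packets_queued * packet_bytes * 8 / link_bits_per_second


if __name__ == "__main__":
    for queued in (4, 12):
        delay = queue_delay_seconds(queued)
        print(f"{queued:2d} packets queued: about {delay:.2f}s")
```

Under this assumption a dozen queued packets take about 1.44s to drain, close to the 1.5s read on the trace, and roughly four queued packets would account for the 0.5s of the first trace.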

Acknowledgements

Some hackers gave a hand or advice for setting up this small experiment. Benjamin Gaidioz and Mathieu Goutelle even gave two! Thanks to all of them.

Going further

This page is focused on one particular aspect of TCP. For instance, I talked only about steady state and average throughput but avoided transient behaviour. The field of problems that TCP is trying to solve is huge and goes much beyond that. Here below are two pointers, randomly chosen from an ocean of articles and books.

I think the book by Richard Stevens is usually quoted as "the" reference.

Tiziana Ferrari has built a nice bibliographic page, mainly focused on TCP performance.